Handheld data-collection paradigms offer an efficient and intuitive way to collect large-scale robot manipulation demonstrations. However, achieving contact-rich bimanual manipulation with these methods remains a key challenge, held back mainly by limited hardware adaptability and data efficacy.

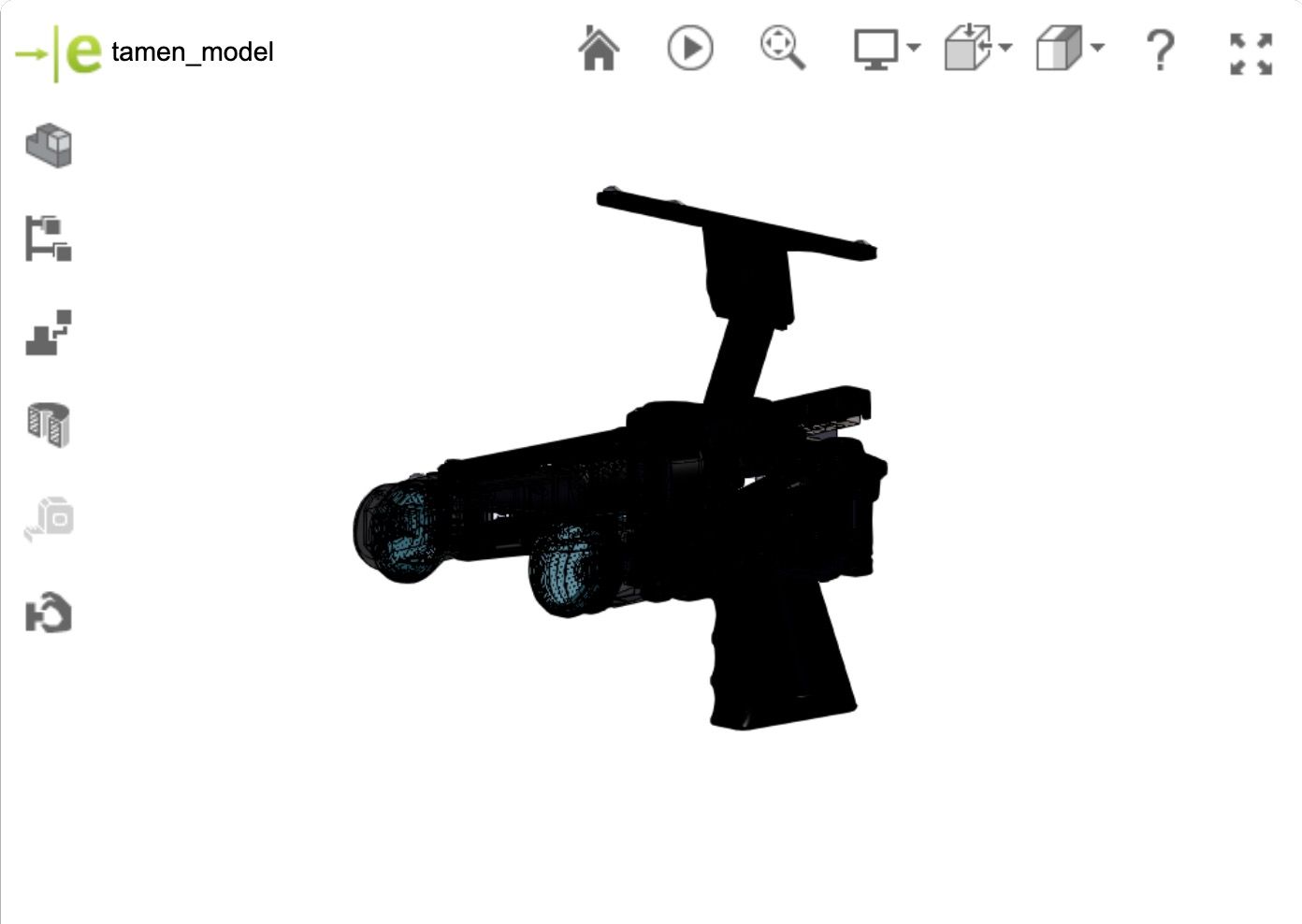

To bridge these gaps, we introduce TAMEn, a visuo-tactile data engine for bimanual contact-rich manipulation, which integrates hardware, acquisition strategy, and policy learning into a closed-loop framework.

1. A human-machine interface that supports a dual-mode pipeline, combining sub-millimeter MoCap with VR-based in-the-wild acquisition, and can rapidly adapt to heterogeneous grippers.
2. A data collection recipe that incorporates real-time validation during collection and organizes heterogeneous multimodal data into a pyramid-structured regime for staged learning.
3. A closed-loop data flywheel that leverages AR-based teleoperation with tactile feedback (tAmeR) to refine policies using corrective data from realistic failures.
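The page does not specify how the pyramid is stored or how staged learning draws from it. A minimal sketch, assuming three tiers ordered from a broad base (generalization) through coordination to a narrow top (failure recovery), and assuming each stage trains on every tier from the base up to the current one. `TIERS`, `Episode`, and `DataPyramid` are hypothetical names, not TAMEn's code.

```python
from dataclasses import dataclass, field
from typing import List

# Hypothetical tier names, ordered from the broad base of the pyramid to its narrow top.
TIERS = ("generalization", "coordination", "recovery")


@dataclass
class Episode:
    episode_id: str
    tier: str  # one of TIERS; this labeling scheme is an assumption


@dataclass
class DataPyramid:
    episodes: List[Episode] = field(default_factory=list)

    def add(self, episode: Episode) -> None:
        # Reject episodes that do not belong to a known tier.
        if episode.tier not in TIERS:
            raise ValueError(f"unknown tier: {episode.tier}")
        self.episodes.append(episode)

    def count(self, tier: str) -> int:
        return sum(e.tier == tier for e in self.episodes)

    def stage(self, k: int) -> List[Episode]:
        """Training set for stage k (0-based): every tier from the base up to tier k."""
        allowed = set(TIERS[: k + 1])
        return [e for e in self.episodes if e.tier in allowed]


if __name__ == "__main__":
    pyramid = DataPyramid()
    for i in range(6):
        pyramid.add(Episode(f"ep_g{i}", "generalization"))
    for i in range(3):
        pyramid.add(Episode(f"ep_c{i}", "coordination"))
    pyramid.add(Episode("ep_r0", "recovery"))
    for k, name in enumerate(TIERS):
        print(f"stage {k} (up to {name}): {len(pyramid.stage(k))} episodes")
```

The cumulative schedule is only one plausible reading of "staged learning"; training each stage on its own tier alone would be an equally simple variant.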

To balance data quality and environmental diversity, we implement a dual-mode acquisition pipeline:

- A precision mode that leverages motion capture for high-fidelity demonstrations (sub-millimeter accuracy).
- A portable mode that uses VR-based tracking for in-the-wild acquisition and for AR-based, tactile-visualized recovery teleoperation (tAmeR).
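One way to let a single recording pipeline switch between the two modes is to hide each tracker behind a common pose interface and tag every sample with the mode that produced it. The sketch below assumes that design; `Mode`, `MocapSource`, `VRSource`, and `Recorder` are hypothetical names, the pose layout is assumed, and both sources return a fixed placeholder pose instead of talking to real MoCap or VR hardware.

```python
from enum import Enum
from typing import Protocol, Tuple

# Assumed pose layout: position (x, y, z) followed by a unit quaternion (qx, qy, qz, qw).
Pose = Tuple[float, float, float, float, float, float, float]


class Mode(Enum):
    PRECISION = "mocap"  # motion capture, high-fidelity lab setting
    PORTABLE = "vr"      # VR tracking, in-the-wild setting


class PoseSource(Protocol):
    def read_pose(self) -> Pose: ...


class MocapSource:
    def read_pose(self) -> Pose:
        # Placeholder: a real driver would query the MoCap system here.
        return (0.0, 0.0, 0.0, 0.0, 0.0, 0.0, 1.0)


class VRSource:
    def read_pose(self) -> Pose:
        # Placeholder: a real driver would query the VR tracker here.
        return (0.0, 0.0, 0.0, 0.0, 0.0, 0.0, 1.0)


class Recorder:
    def __init__(self, mode: Mode) -> None:
        self.switch(mode)

    def switch(self, mode: Mode) -> None:
        # Swap the tracking backend without touching the rest of the recording pipeline.
        self.mode = mode
        self._source: PoseSource = MocapSource() if mode is Mode.PRECISION else VRSource()

    def sample(self) -> dict:
        # Tag each sample with its acquisition mode so later stages can filter or weight it.
        return {"mode": self.mode.value, "pose": self._source.read_pose()}
```

Keeping the mode tag on every sample means downstream code can mix high-precision and in-the-wild data while still telling them apart.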


We evaluate the effectiveness of the TAMEn system on a diverse set of contact-rich manipulation tasks. Experiments show that the proposed closed-loop visuo-tactile learning framework increases the average task success rate from 34% to 75% across these bimanual tasks.
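For scale, the two reported averages translate as follows (plain arithmetic on the 34% and 75% figures, not an additional result):

```latex
\begin{aligned}
\text{absolute gain} &= 75\% - 34\% = 41\ \text{percentage points} \\
\text{relative gain} &= 75 / 34 \approx 2.21 \\
\text{failure-rate reduction} &= \frac{(100 - 34) - (100 - 75)}{100 - 34} = \frac{41}{66} \approx 0.62
\end{aligned}
```

In other words, the average failure rate drops from 66% to 25%, removing roughly three in five failures.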

The robot cooperatively manipulates a flexible sheet to lift the herbs and pour them into a target container. Successful execution requires stable bimanual coordination, careful handling of the deformable support, and precise control of tilting and release.

Ours-A (+Tactile +Pretrained)

Ours-B (+Tactile +Pretrained +DAgger)

Tactile pretraining and recovery data improve policy transfer across object variations and substantially increase robustness when visual perception is degraded, especially during contact-rich execution.

- Robustness: Cable Mounting (full disturbance)
- Robustness: Cable Mounting (post-grasp disturbance)
- Generalization: Cable Mounting
- Generalization: Binder Clip Removal
- Generalization: Herbal Transfer
- Robustness: Herbal Transfer (post-grasp disturbance)

Visuo-tactile learning with tactile pretraining and DAgger significantly improves performance on unseen objects.

Tactile pretraining and DAgger improve robustness during contact-rich stages.

Introducing TAMEn, a Tactile-Aware Manipulation Engine for closed-loop data collection in contact-rich bimanual tasks. TAMEn builds on the UMI paradigm with key enhancements in multimodality, precision-portability synergy, replayability, and a closed-loop data flywheel.

(a) A wearable visuo-tactile interface captures rich multimodal data and breaks the precision-portability trade-off through a dual-mode pipeline that quickly switches between MoCap and VR-based tracking.

(b) Online feasibility checking ensures that demonstrations can be reliably replayed on the robot. All data are unified into a pyramid for efficient staged learning across generalization, coordination, and failure recovery.
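The page does not state which criteria the online feasibility check applies. A minimal sketch, assuming it screens each recorded trajectory against a workspace box, an end-effector speed limit, and the target gripper's opening range; `FeasibilityLimits`, `check_demo`, and every threshold are hypothetical placeholders. A real check would more likely run inverse kinematics and collision tests for the target robot.

```python
import math
from dataclasses import dataclass
from typing import List, Optional, Sequence, Tuple

Point = Tuple[float, float, float]


@dataclass
class FeasibilityLimits:
    # All values are hypothetical placeholders, not TAMEn's settings.
    workspace_min: Point = (-0.6, -0.6, 0.0)  # metres, robot base frame
    workspace_max: Point = (0.6, 0.6, 0.8)
    max_speed: float = 0.5                      # metres per second
    gripper_range: Tuple[float, float] = (0.0, 0.08)  # gripper opening, metres


def check_demo(positions: Sequence[Point], widths: Sequence[float], dt: float,
               limits: Optional[FeasibilityLimits] = None) -> List[str]:
    """Return human-readable violations; an empty list means the demo passes."""
    limits = limits or FeasibilityLimits()
    issues: List[str] = []
    # 1) Every end-effector position must lie inside the workspace box.
    for t, p in enumerate(positions):
        if any(v < lo or v > hi for v, lo, hi in zip(p, limits.workspace_min, limits.workspace_max)):
            issues.append(f"step {t}: end effector outside workspace")
    # 2) Translational speed between consecutive samples must stay under the limit.
    for t in range(1, len(positions)):
        speed = math.dist(positions[t], positions[t - 1]) / dt
        if speed > limits.max_speed:
            issues.append(f"step {t}: speed {speed:.2f} m/s exceeds limit")
    # 3) Recorded gripper widths must be reachable by the target gripper.
    lo, hi = limits.gripper_range
    for t, w in enumerate(widths):
        if not lo <= w <= hi:
            issues.append(f"step {t}: gripper width {w:.3f} m out of range")
    return issues
```

Running a check like this while recording lets the operator redo a demonstration immediately instead of discovering an unreplayable one later.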

(c) tAmeR, our AR-based teleoperation system, collects recovery data with tactile feedback during policy execution and feeds it back into the pyramid for continuous policy refinement.
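The flywheel resembles an intervention-gated variant of DAgger: the policy runs, an operator takes over only when it starts to fail, and the corrections are aggregated with all earlier data before retraining. The toy sketch below illustrates that loop on a 1-D system with a linear policy; the dynamics, the operator rule, and every function name are hypothetical stand-ins, not TAMEn's learning pipeline.

```python
from typing import Callable, List, Tuple

# Toy 1-D stand-in for real multimodal data: (observation, action) pairs.
Step = Tuple[float, float]


def expert_correction(obs: float) -> float:
    """Hypothetical operator correction: move the state halfway toward the goal at 0."""
    return -0.5 * obs


def make_policy(gain: float) -> Callable[[float], float]:
    """Toy linear policy a = gain * o."""
    return lambda obs: gain * obs


def needs_help(obs: float, action: float) -> bool:
    """Operator intervenes when the policy's action would move the state away from the goal."""
    return abs(obs + action) > abs(obs)


def collect_recovery_round(policy: Callable[[float], float], start: float, horizon: int) -> List[Step]:
    """Roll out the policy; record an (obs, correction) pair whenever the operator takes over."""
    corrections: List[Step] = []
    obs = start
    for _ in range(horizon):
        action = policy(obs)
        if needs_help(obs, action):
            action = expert_correction(obs)
            corrections.append((obs, action))
        obs += action  # toy dynamics: the action is a displacement
    return corrections


def fit_gain(data: List[Step]) -> float:
    """Least-squares fit of a = k * o (no intercept) on the aggregated dataset."""
    num = sum(o * a for o, a in data)
    den = sum(o * o for o, _ in data)
    return num / den if den else 0.0


def flywheel(initial_gain: float = 0.1, rounds: int = 3, horizon: int = 10) -> float:
    data: List[Step] = []  # aggregated across rounds and never discarded (DAgger-style)
    gain = initial_gain
    for _ in range(rounds):
        data += collect_recovery_round(make_policy(gain), start=1.0, horizon=horizon)
        if data:
            gain = fit_gain(data)  # retrain on everything collected so far
    return gain
```

In this toy, the first round is all interventions, the refit gain matches the operator's correction, and later rounds trigger no interventions, which mirrors how corrective data should shrink as the policy improves.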