Towards the Era of Post-Training for Physical AI


Overview

World Engine does not scale the dataset.

It scales the difficulty.

The Problem

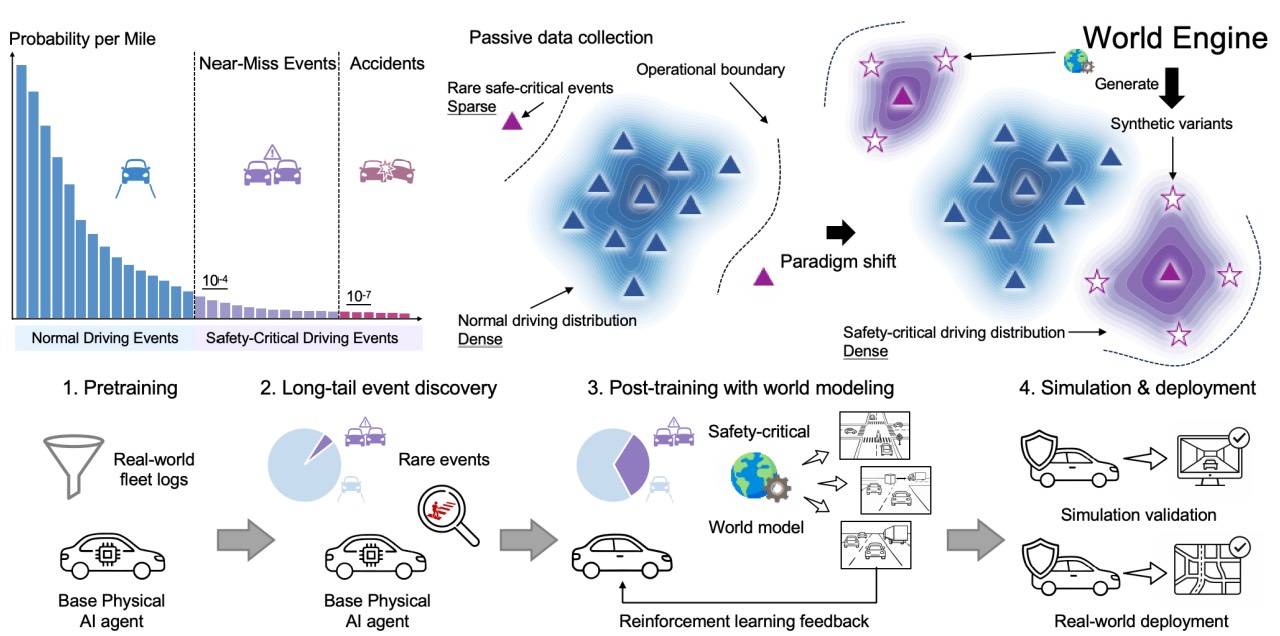

There is an unspoken consensus in autonomous driving: more data means safer models.

It is intuitive. It is reasonable. And for a long time, it shaped how the entire industry thought about progress.

But the dangerous moments that matter most, the split-second decisions that separate a safe vehicle from a tragic one, are vanishingly rare in real-world driving logs. You can collect petabytes of highway cruising and urban commutes.

You will still be systematically underexposed to the edge cases that actually kill people.

"More data does not solve this. It dilutes it.

The real problem is not data volume — it is data distribution."

The Insight

What autonomous driving systems actually need is not more of the same. It is more of what they have never seen. The dangerous scenarios that cause failures are dangerous precisely because they are rare. No amount of routine driving data will naturally surface them at the density required to learn from them.
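
The density argument above can be made concrete with a little arithmetic. The sketch below is only an illustration: the rare-event rate and the dataset sizes are hypothetical numbers chosen for readability, not figures from this work. It shows that collecting ten times more logs raises the count of rare scenes but leaves their share of the dataset unchanged, while adding targeted scenes changes the share directly.

```python
def rare_event_stats(total_scenes, rare_rate, synthetic_rare=0):
    """Return (rare_count, rare_fraction) for a dataset.

    rare_rate is a hypothetical fraction of logged scenes that are
    safety-critical; synthetic_rare is the number of targeted synthetic
    scenes added on top of the logs.
    """
    rare = total_scenes * rare_rate + synthetic_rare
    total = total_scenes + synthetic_rare
    return rare, rare / total


# All values below are illustrative assumptions.
base = rare_event_stats(100_000, 0.001)
scaled = rare_event_stats(1_000_000, 0.001)  # 10x more routine logs
targeted = rare_event_stats(100_000, 0.001, synthetic_rare=10_000)

print(f"base:     {base[0]:.0f} rare scenes, fraction {base[1]:.4f}")
print(f"scaled:   {scaled[0]:.0f} rare scenes, fraction {scaled[1]:.4f}")
print(f"targeted: {targeted[0]:.0f} rare scenes, fraction {targeted[1]:.4f}")
```

Under these assumed numbers, scaling keeps the rare fraction at the logged rate, whereas targeted synthesis raises it by roughly two orders of magnitude.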

This is where synthetic data enters, not as a shortcut, but as a necessity.

The Answer

If the real world will not generate enough critical scenarios on its own, construct them deliberately. Synthesize the near-misses. Generate the adversarial cut-ins. Reconstruct the intersection failures and run them ten thousand times with controlled variation.

The questions are: how do you synthesize data realistically enough to transfer?

How do you ensure the model trained on synthetic scenes actually performs better in the real world?

That is the problem World Engine was built to solve.

Method

We built World Engine to address this gap directly: not with a new architecture, not with a better backbone, but with a new post-training paradigm.

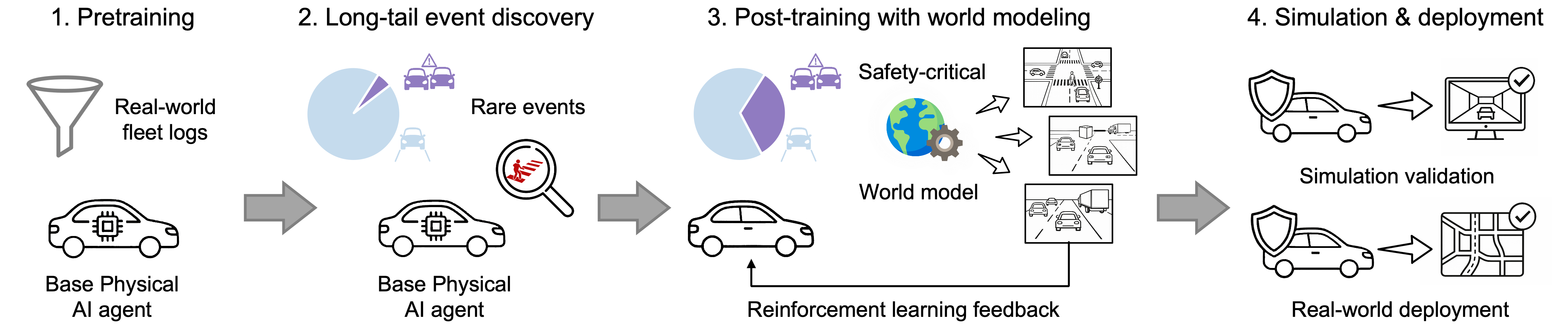

The framework has four stages, designed to work together as a closed loop.

This is not just data augmentation. It is targeted curriculum construction, driven by real failure signals and closed by reinforcement learning.

1. Identify where the deployed model fails: not in benchmarks, but in the training data, in the real world, in the specific scenarios where performance breaks down.
2. Use 3D Gaussian Splatting to rebuild those failure scenarios as high-fidelity neural environments. The real world becomes an editable substrate.
3. Generate adversarial variations of those critical scenes: harder, more diverse, more representative of the long tail. Create the training data that real-world driving will never naturally provide.
4. Apply reinforcement learning post-training on this targeted, synthesized data. Teach the model exactly what it was missing.
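
The control flow of the four stages can be sketched as a toy loop. Everything in the block is a hypothetical stand-in: `Scenario`, the scalar `difficulty` and `skill`, and all four stage functions are invented for illustration. The reconstruction step does not perform 3D Gaussian Splatting and the update step is not reinforcement learning. The sketch only shows how failures found in logs feed reconstruction, variation, and a policy update, and how the loop repeats.

```python
import random
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Scenario:
    name: str
    difficulty: float  # hypothetical scalar for how hard the scene is


def discover_failures(skill, logs):
    """Stage 1: keep the logged scenarios the current policy fails."""
    return [s for s in logs if s.difficulty > skill]


def reconstruct(scenario):
    """Stage 2 placeholder: only tags the scenario as an editable copy."""
    return replace(scenario, name=scenario.name + "/recon")


def generate_variations(scenario, n, rng):
    """Stage 3 placeholder: perturb difficulty upward to mimic harder variants."""
    return [
        replace(scenario, name=f"{scenario.name}/v{i}",
                difficulty=scenario.difficulty + rng.uniform(0.0, 0.1))
        for i in range(n)
    ]


def post_train(skill, scenes, step=0.5):
    """Stage 4 placeholder: move skill part-way toward the hardest scene.
    A real system would run reinforcement learning here."""
    if not scenes:
        return skill
    hardest = max(s.difficulty for s in scenes)
    return skill + step * max(0.0, hardest - skill)


def closed_loop(skill, logs, rounds=5, n_variations=4, seed=0):
    rng = random.Random(seed)
    for _ in range(rounds):
        failures = discover_failures(skill, logs)
        if not failures:
            break  # nothing left to fix in these logs
        scenes = [v for f in failures
                  for v in generate_variations(reconstruct(f), n_variations, rng)]
        skill = post_train(skill, scenes)
    return skill


logs = [Scenario("cruise", 0.1), Scenario("cut_in", 0.6),
        Scenario("unprotected_left", 0.8)]
final_skill = closed_loop(skill=0.3, logs=logs)
print(len(discover_failures(0.3, logs)), len(discover_failures(final_skill, logs)))
```

The design point the sketch tries to capture is that the training set for each round is derived from the current policy's failures rather than sampled uniformly from the logs.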

Fig. 1 — Conceptual pipeline of World Engine. Starting from real-world driving logs, long-tail events are discovered, reconstructed into photorealistic interactive environments, augmented with diverse traffic variations, and used to refine the policy via reinforcement post-training.

Fig. 2 — System overview. World Engine integrates a neural rendering simulation engine with a generative behaviour world model and RL-based post-training into a unified closed-loop framework for physical AI.

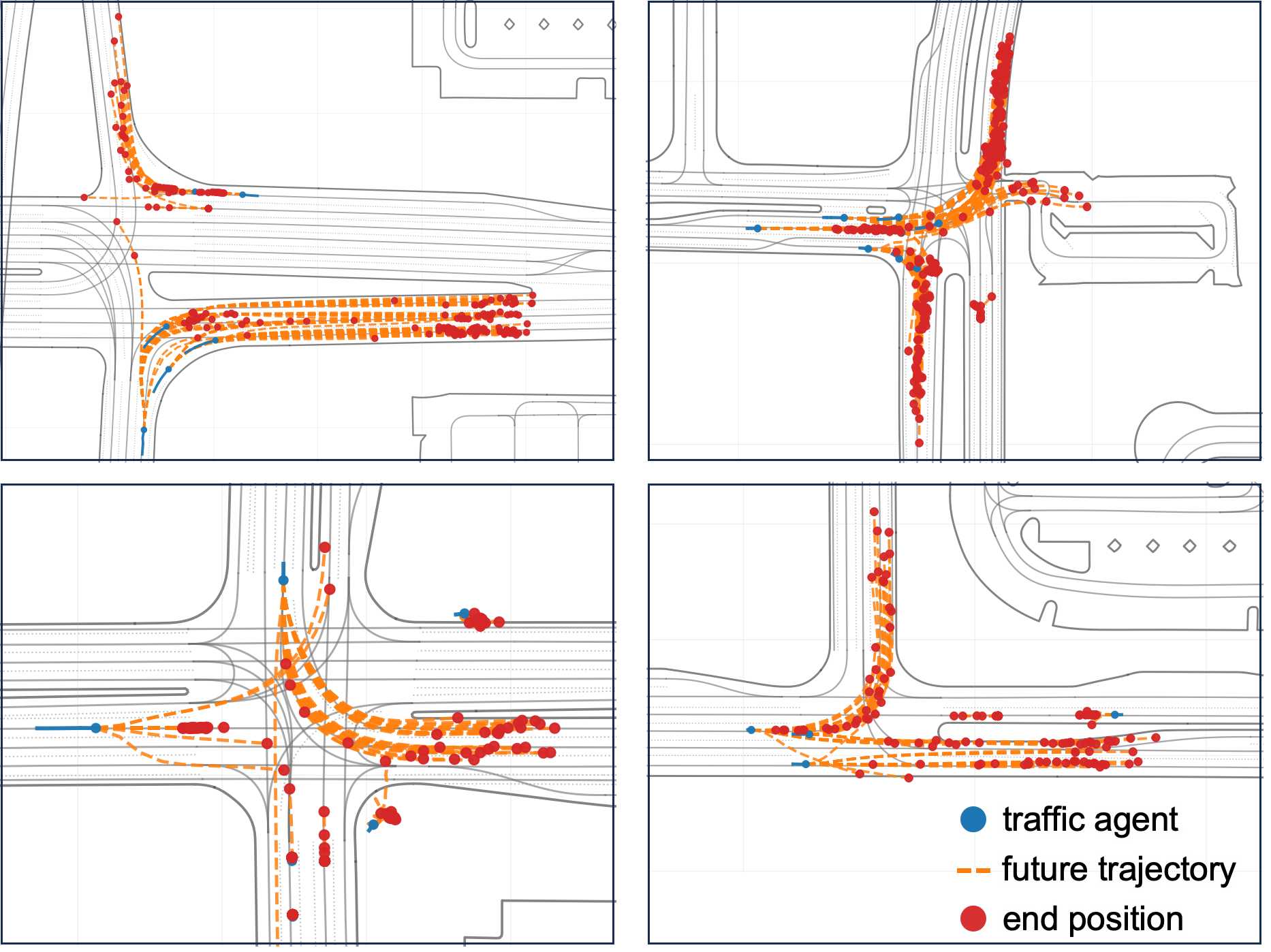

Fig. 3 — Behaviour world model. Left: Variants of safety-critical driving scenarios generated by World Engine through photorealistic sensor simulation. Right: Behavioural variations synthesized from a single long-tail scenario by the behaviour world model.

Behavioural variations synthesized from real long-tail events by the behaviour world model.

Photorealistic sensor observations rendered by World Engine's 3DGS simulation engine.

Results

The results validate the approach.

These are not benchmark numbers chased for their own sake.

They are the product of a system that was explicitly designed to find what the model does not know — and fix it.

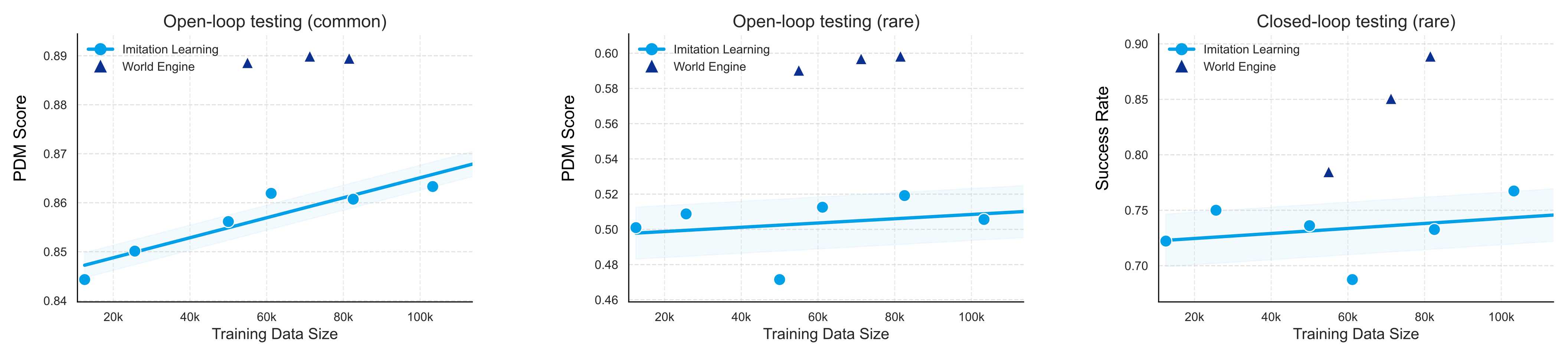

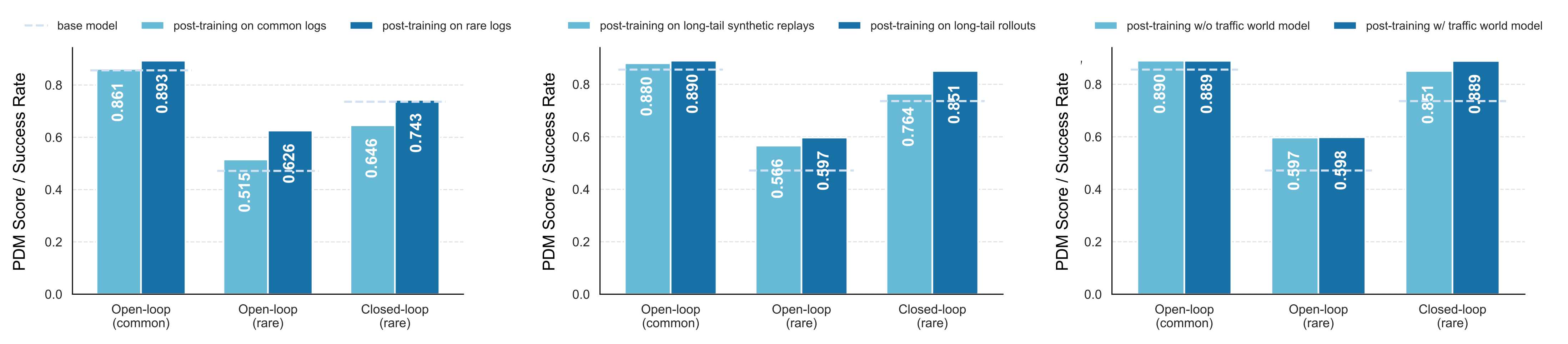

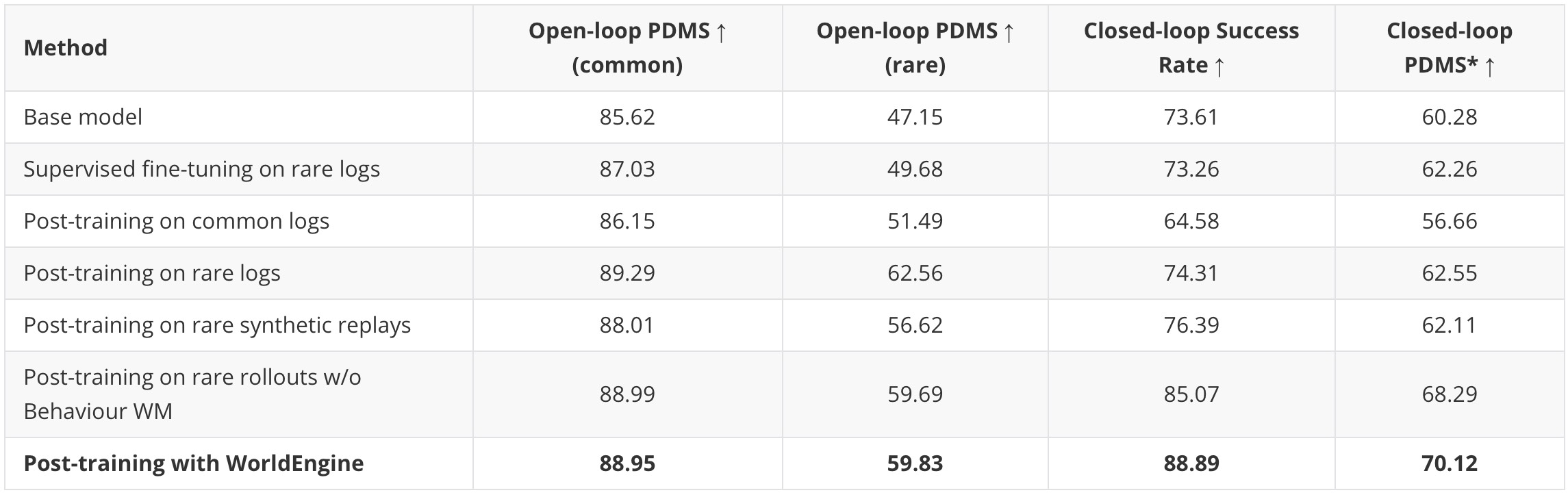

We evaluate on a curated set of safety-critical long-tail scenarios from the nuPlan test split, using open-loop PDM Score and closed-loop success rate (4-second episodes; a collision or an off-road event counts as a failure).
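
As a minimal sketch of the closed-loop success criterion described above, the block below counts an episode as a success only if it ends with neither a collision nor an off-road event. The `Episode` record and its field names are assumptions made for this example, and the sample episodes are made up. The PDM Score is not reproduced here.

```python
from dataclasses import dataclass


@dataclass
class Episode:
    collided: bool   # hypothetical flag: any collision during the episode
    off_road: bool   # hypothetical flag: the vehicle left the drivable area


def closed_loop_success_rate(episodes):
    """Fraction of episodes with no collision and no off-road event."""
    if not episodes:
        raise ValueError("need at least one episode")
    successes = sum(1 for e in episodes if not (e.collided or e.off_road))
    return successes / len(episodes)


# Made-up outcomes for four episodes.
episodes = [
    Episode(collided=False, off_road=False),
    Episode(collided=True, off_road=False),
    Episode(collided=False, off_road=True),
    Episode(collided=False, off_road=False),
]
rate = closed_loop_success_rate(episodes)
print(rate)
```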

Fig. 1 — Data scaling analysis. Performance on rare safety-critical cases saturates quickly as pre-training data scales. World Engine post-training starting from a 50k-scene base model delivers gains equivalent to ~14× more pre-training data, at a fraction of the cost.

Fig. 2 — Effectiveness of World Engine. Post-training with World Engine consistently improves closed-loop performance across configurations, confirming that targeted synthesis of safety-critical scenarios is the key driver of gains over the base model.

Fig. 3 — Base model vs. World Engine. Qualitative comparison on safety-critical nuPlan scenarios. The base model (FAIL) collides or leaves the road; the World Engine post-trained model (PASS) successfully navigates the same high-risk interactions across diverse scene types.

Table 1 — Full quantitative results across post-training configurations. Despite being optimized on long-tail cases, the post-trained agent maintains strong performance on common scenarios, confirming that safety-oriented adaptation does not compromise general driving competence.

Production-Scale Validation

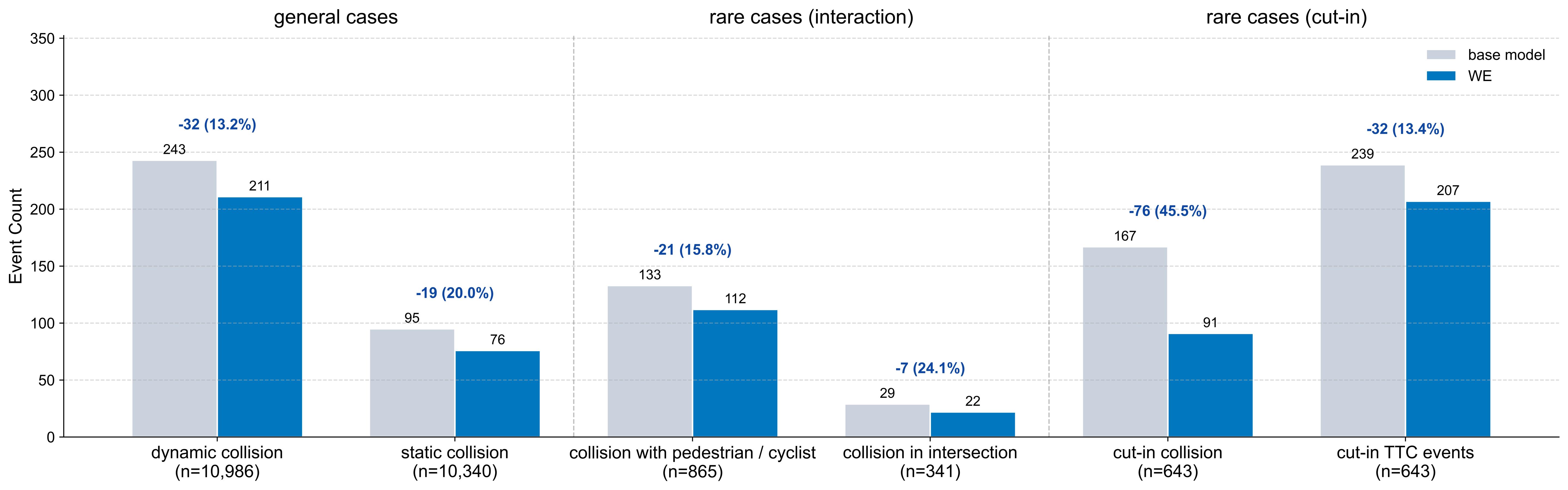

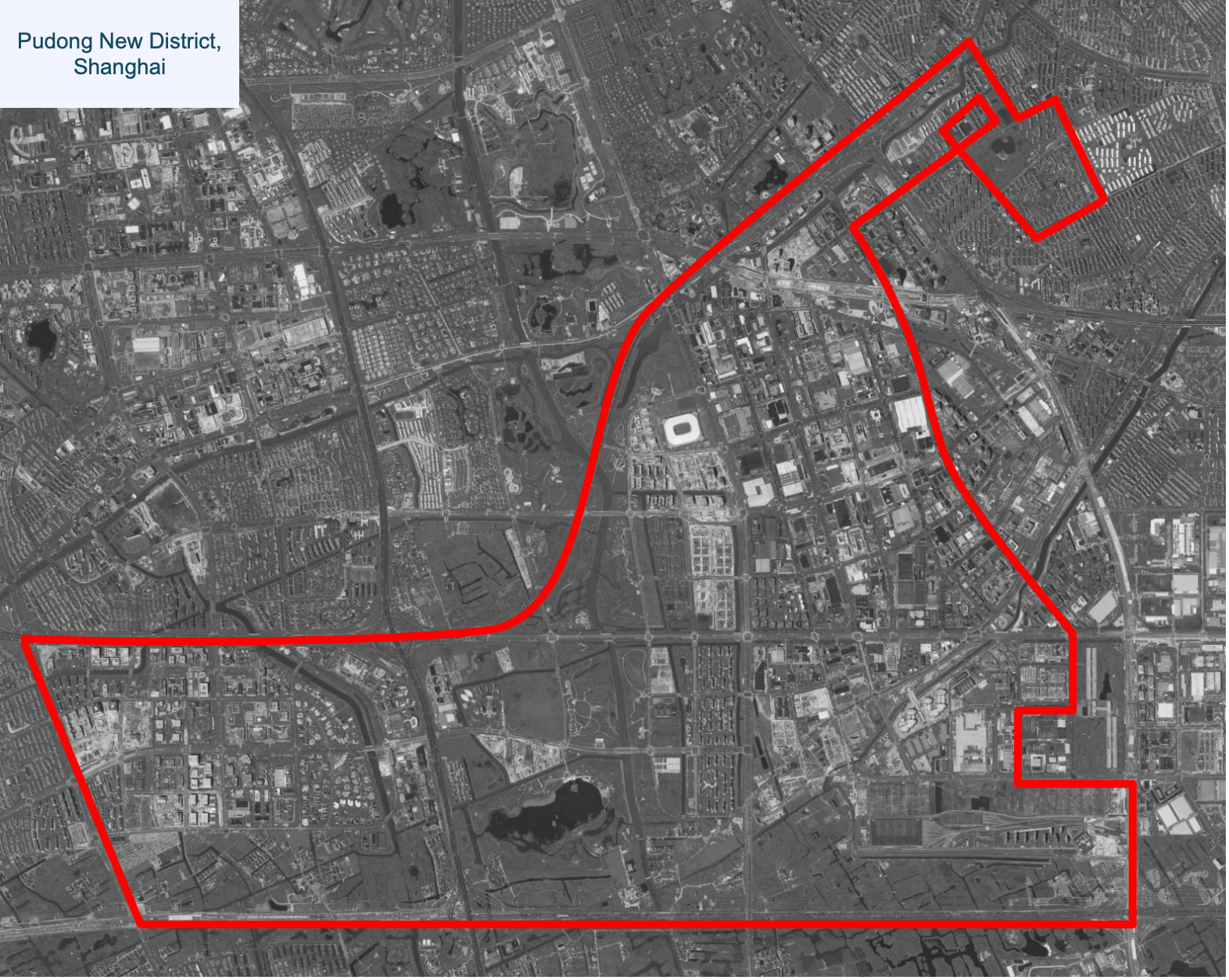

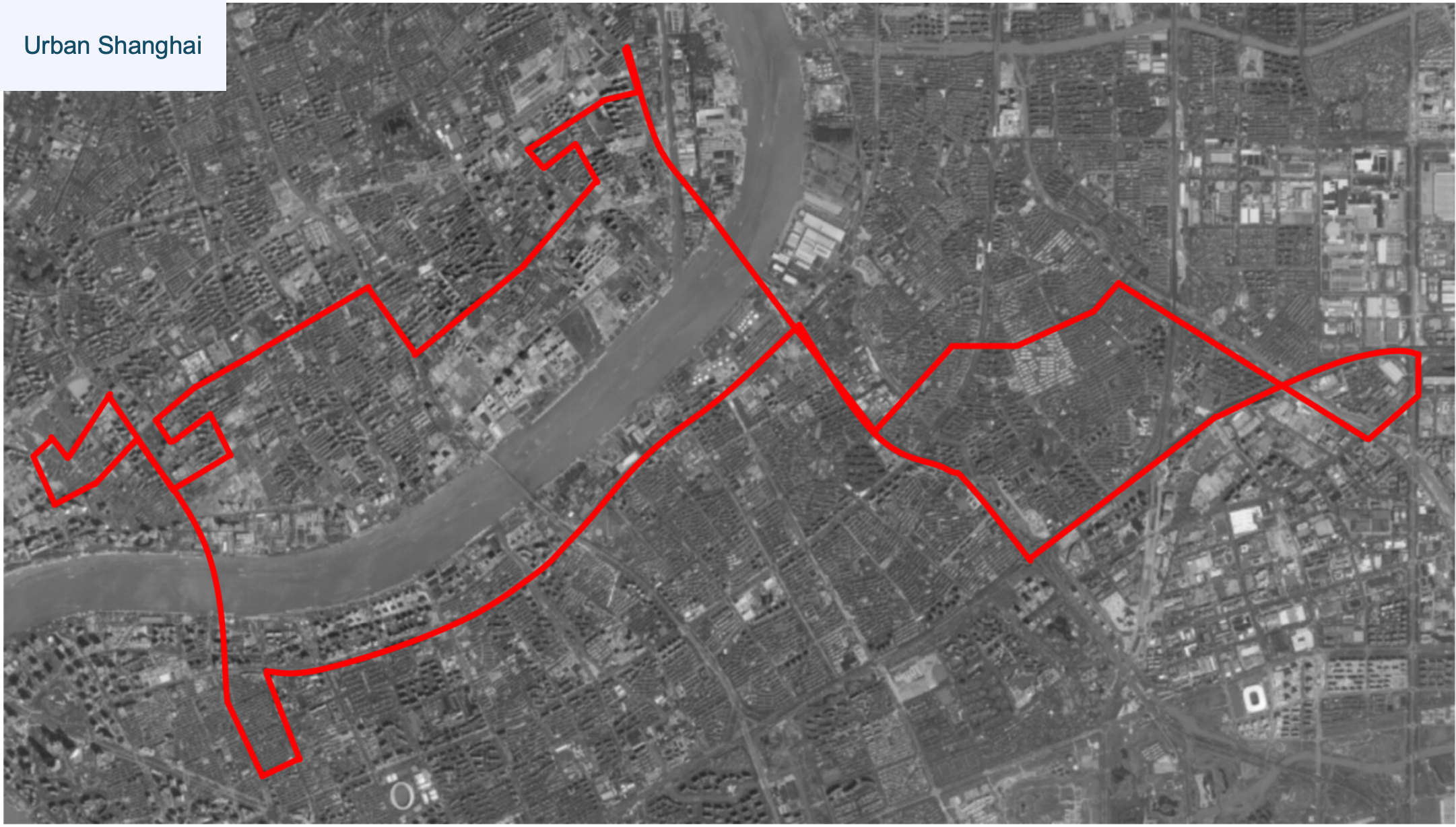

We validate World Engine on a production-scale autonomous driving system trained on over 80,000 hours of real-world driving logs and evaluated on an industry-grade closed-loop simulation platform with over 10,000 scenarios. In these closed-loop simulation evaluations, collision rates dropped by up to 45.5%. In real-world deployment, the model ran 200 km without a single disengagement.

Fig. 1 — Production-scale closed-loop simulation results. World Engine post-training improves key safety metrics over the ADS base model, reducing collision rates by up to 45.5% across over 10,000 industry-grade simulation scenarios.
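
A percentage drop such as the one reported above is presumably a relative reduction against the base model, although the page does not spell out the formula. The sketch below shows that calculation under this assumption. The two input rates are invented for the example; the absolute collision rates are not given here.

```python
def relative_reduction(base_rate, post_rate):
    """Relative drop of post_rate with respect to base_rate, as a fraction."""
    if base_rate <= 0:
        raise ValueError("base_rate must be positive")
    return (base_rate - post_rate) / base_rate


# Hypothetical rates, chosen only to illustrate the arithmetic.
reduction = relative_reduction(base_rate=0.20, post_rate=0.12)
print(f"{reduction:.1%}")
```

Under this reading, a reported reduction of up to 45.5% corresponds to a value of about 0.455 from this function on the best-improving metric.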

AITO M9 — the production vehicle used for real-world validation of World Engine post-training.

Route A — ~65 km urban motorways and elevated roads in Shanghai, evaluated in two daytime runs.

Route B — ~70 km urban and residential roads in Shanghai, evaluated in one nighttime run.
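
The route descriptions above are consistent with the 200 km total if each run covers its full route once. The quick check below makes that assumption explicit and uses the approximate route lengths as stated.

```python
# Approximate route lengths and run counts as listed above.
# Assumption: every run covers the whole route exactly once.
routes = {
    "A": {"km": 65, "runs": 2},  # two daytime runs
    "B": {"km": 70, "runs": 1},  # one nighttime run
}

total_km = sum(r["km"] * r["runs"] for r in routes.values())
print(total_km)
```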

Broader Impact

We chose autonomous driving as the proving ground because the safety stakes are real, the failure modes are measurable, and the gap between simulation and deployment is well understood.

But the underlying problem — that training distributions systematically underrepresent consequential edge cases — is not specific to vehicles. It applies to any Physical AI system that must operate reliably in an open, uncontrolled world.

World Engine is a framework. The domain is autonomous driving. The problem it solves is universal.

Open Contribution

We are not proposing World Engine as the final answer. We are proposing it as a new path — one that the community can build on, stress-test, and extend.

If you work on end-to-end (E2E) autonomous driving, Physical AI, or any system where the hardest moments are also the rarest, this work is for you.

Citation

If you find this work useful, please consider citing:

Coming Soon

If you find the Render Assets (MTGS) useful, please consider citing:

@article{li2025mtgs,
  title={MTGS: Multi-Traversal Gaussian Splatting},
  author={Li, Tianyu and Qiu, Yihang and Wu, Zhenhua and Lindstr{\"o}m, Carl and Su, Peng and Nie{\ss}ner, Matthias and Li, Hongyang},
  journal={arXiv preprint arXiv:2503.12552},
  year={2025}
}

If you use the augmented scenario data, please consider citing:

@inproceedings{zhou2025nexus,
  title={Decoupled Diffusion Sparks Adaptive Scene Generation},
  author={Zhou, Yunsong and Ye, Naisheng and Ljungbergh, William and Li, Tianyu and Yang, Jiazhi and Yang, Zetong and Zhu, Hongzi and Petersson, Christoffer and Li, Hongyang},
  booktitle={ICCV},
  year={2025}
}

@article{li2025optimization,
  title={Optimization-Guided Diffusion for Interactive Scene Generation},
  author={Li, Shihao and Ye, Naisheng and Li, Tianyu and Chitta, Kashyap and An, Tuo and Su, Peng and Wang, Boyang and Liu, Haiou and Lv, Chen and Li, Hongyang},
  journal={arXiv preprint arXiv:2512.07661},
  year={2025}
}

If you use the reinforced refinement method in World Engine, please consider citing:

@ARTICLE{11353028,
  author={Liu, Haochen and Li, Tianyu and Yang, Haohan and Chen, Li and Wang, Caojun and Guo, Ke and Tian, Haochen and Li, Hongchen and Li, Hongyang and Lv, Chen},
  journal={IEEE Transactions on Pattern Analysis and Machine Intelligence},
  title={Reinforced Refinement With Self-Aware Expansion for End-to-End Autonomous Driving},
  year={2026},
  volume={48},
  number={5},
  pages={5774-5792},
  keywords={Adaptation models;Self-aware;Autonomous vehicles;Pipelines;Planning;Training;Reinforcement learning;Uncertainty;Data models;Safety;End-to-end autonomous driving;reinforced finetuning;imitation learning;motion planning},
  doi={10.1109/TPAMI.2026.3653866}
}

If you find the data scaling analysis helpful, please consider citing:

@article{tian2025simscale,
  title={SimScale: Learning to Drive via Real-World Simulation at Scale},
  author={Haochen Tian and Tianyu Li and Haochen Liu and Jiazhi Yang and Yihang Qiu and Guang Li and Junli Wang and Yinfeng Gao and Zhang Zhang and Liang Wang and Hangjun Ye and Tieniu Tan and Long Chen and Hongyang Li},
  journal={arXiv preprint arXiv:2511.23369},
  year={2025}
}